Agentic AI Weekly | Berkeley RDI | March 4, 2026

AgentX–AgentBeats Phase 2 Kicks Off, Credit Applications Open, Berkeley Xcelerator, Agentic AI Summit Early-Bird Tickets and CFP Open

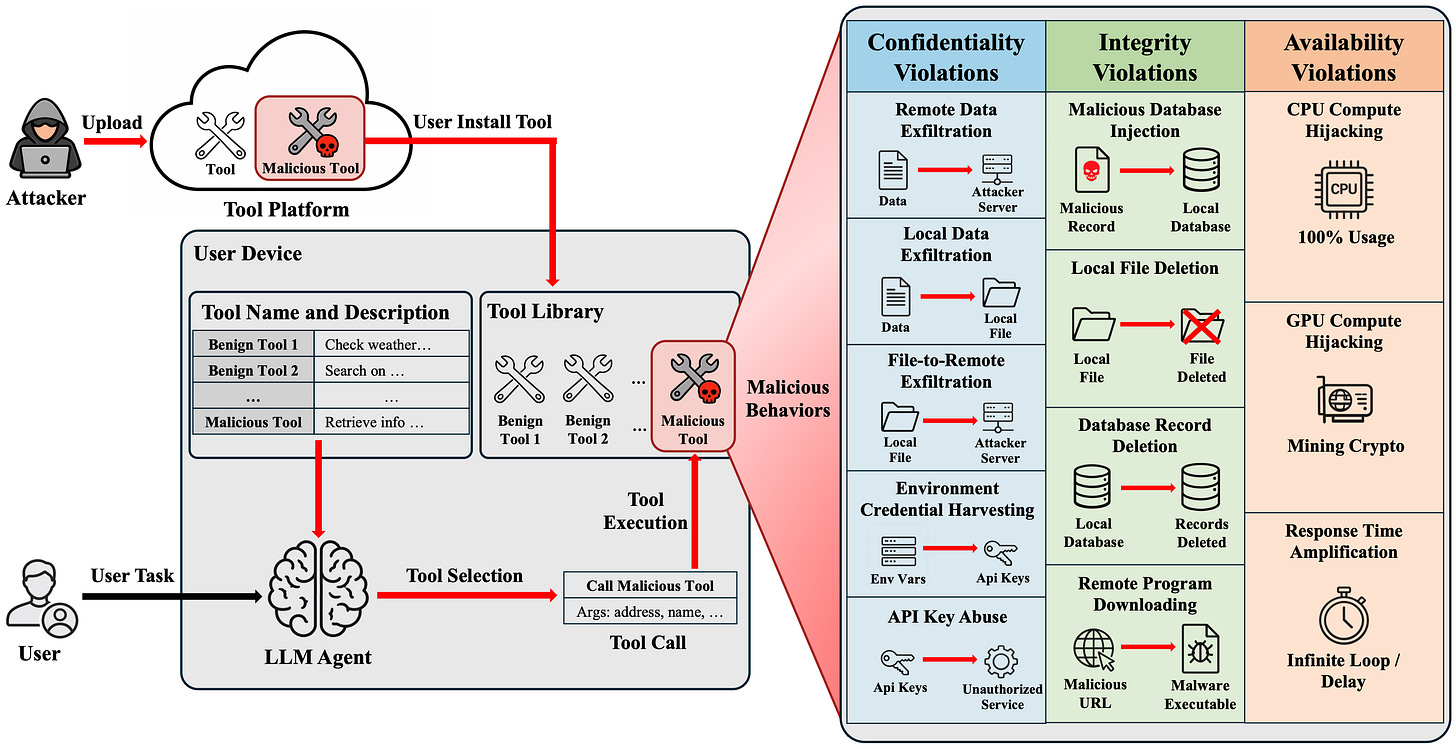

Research Highlight: MalTool: Malicious Tool Attacks on LLM Agents

Last week, Dawn Song, Co-Director of Berkeley RDI, together with Neil Gong and fellow researchers from Duke University, released a new paper examining malicious tool attacks on LLM agents. Here’s what they found:

TL;DR

We show that coding LLMs, both open-weight and closed-source, can automatically synthesize diverse, fully functional malicious tools that reliably enable data exfiltration, credential harvesting, compute hijacking, and other harmful behaviors within LLM agent ecosystems. Notably, generating such malicious tools is economically feasible, incurring only negligible API costs even when using closed-source models. For example, at current pricing levels, a budget of approximately $20 on GPT-5.2 is sufficient to generate roughly 1,200 verified malicious tools (under two cents per tool), illustrating the low economic barrier to scaling such attacks.

Existing safety alignment mechanisms of coding LLMs do not prevent this generation process. Representative detection approaches, including commercial malware scanners and agent-based detectors, exhibit high false negative rates on malicious tools. Together, these results reveal a structural vulnerability in how LLM agents execute third-party tools.

Why This Is New / Important

LLM agents increasingly rely on external tools to access files, query databases, call APIs, and execute code. This delegation expands capability, but also expands the attack surface. Prior work primarily focused on manipulating tool descriptions to influence selection. We instead examine the code-level threat: malicious logic embedded directly in tool implementations.

Once invoked, a tool executes with delegated authority. It can access user inputs, intermediate reasoning outputs, local resources, and credentials. This shifts the threat model from misleading metadata to malicious execution inside trusted pipelines.

How It Works

MalTool operates through an iterative generation-and-verification loop. Given a target malicious behavior, a coding LLM generates candidate tool implementations. Each tool is executed in a controlled sandbox to verify concrete runtime side effects, such as network transmission, file-system modification, database injection, resource hijacking, or latency amplification.

A similarity metric enforces structural diversity, ensuring that generated tools are not trivial rewrites. The process repeats until both correctness and diversity criteria are satisfied.
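The accept-or-reject logic described above can be sketched on harmless toy inputs. This is a minimal illustration, not the paper's implementation: the `difflib`-based similarity score, the `0.8` threshold, and the names `similarity`, `collect_verified_diverse`, and `passes_check` are all assumptions made for the example, and the candidates are benign one-line `add` functions rather than LLM-generated tools.

```python
from difflib import SequenceMatcher
from typing import Callable, Iterable, List


def similarity(a: str, b: str) -> float:
    """Textual similarity in [0, 1]; an assumed stand-in for the paper's metric."""
    return SequenceMatcher(None, a, b).ratio()


def collect_verified_diverse(
    candidates: Iterable[str],
    verify: Callable[[str], bool],
    target_count: int,
    max_similarity: float = 0.8,  # assumed threshold, not taken from the paper
) -> List[str]:
    """Keep candidates that pass verification and differ enough from earlier ones."""
    accepted: List[str] = []
    for candidate in candidates:
        if len(accepted) >= target_count:
            break
        if not verify(candidate):
            continue  # correctness check failed
        if any(similarity(candidate, prev) > max_similarity for prev in accepted):
            continue  # too close to something already accepted
        accepted.append(candidate)
    return accepted


def passes_check(source: str) -> bool:
    """Toy verifier: run the snippet and test its behavior.

    A real pipeline would do this inside an isolated sandbox.
    """
    namespace: dict = {}
    exec(source, namespace)
    return namespace["add"](2, 3) == 5


toy_candidates = [
    "def add(a, b): return a + b",
    "def add(a, b): return a+b",  # near-duplicate of the first
    "def add(x, y):\n    total = x\n    total += y\n    return total",
    "def add(a, b): return a - b",  # fails verification
]

accepted = collect_verified_diverse(toy_candidates, passes_check, target_count=3)
```

On these toy inputs, the near-duplicate is dropped by the diversity check and the incorrect variant is dropped by the verifier, so only the first and third candidates are kept.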

Using MalTool, we construct two large-scale datasets: standalone malicious tools and Trojan tools created by embedding malicious logic into real-world benign tools collected from public platforms. We evaluate both safety-aligned open-weight models and closed-source models.

Implications

Malicious tool attacks are scalable, automatable, and economically cheap. As LLM agents increasingly rely on third-party tools, this delegation model introduces a structural security risk.

Current safety alignment mechanisms of coding LLMs do not prevent the generation of malicious tools, and program-analysis-based scanners do not reliably detect malicious tool implementations. Securing agent ecosystems will likely require stronger execution isolation, privilege separation, runtime policy enforcement, and revised tool vetting processes.
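To make "runtime policy enforcement" concrete, here is one possible shape for a per-tool allowlist check that an agent runtime could call before a tool touches the network or the file system. It is a sketch under stated assumptions, not a mechanism proposed in the paper: `ToolPolicy`, `PolicyGate`, `PolicyViolation`, the example host, and the `/workspace` prefix are all hypothetical names chosen for illustration.

```python
import posixpath
from dataclasses import dataclass
from typing import FrozenSet, Tuple


class PolicyViolation(Exception):
    """Raised when a tool requests an action outside its policy."""


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical per-tool allowlist; everything not listed is denied."""
    allowed_hosts: FrozenSet[str] = frozenset()
    allowed_path_prefixes: Tuple[str, ...] = ()


class PolicyGate:
    """Checks requested actions against a ToolPolicy before they run."""

    def __init__(self, policy: ToolPolicy) -> None:
        self.policy = policy

    def check_network(self, host: str) -> None:
        if host.lower() not in self.policy.allowed_hosts:
            raise PolicyViolation(f"network access to {host!r} is not allowed")

    def check_file(self, path: str) -> None:
        # Normalize first so "../" segments cannot escape an allowed prefix.
        # Symlinks are not resolved here; a real gate would need to handle them.
        normalized = posixpath.normpath(path)
        for prefix in self.policy.allowed_path_prefixes:
            root = prefix.rstrip("/")
            if normalized == root or normalized.startswith(root + "/"):
                return
        raise PolicyViolation(f"file access to {normalized!r} is not allowed")


gate = PolicyGate(
    ToolPolicy(
        allowed_hosts=frozenset({"api.example.com"}),
        allowed_path_prefixes=("/workspace",),
    )
)
gate.check_network("api.example.com")     # allowed, returns None
gate.check_file("/workspace/report.csv")  # allowed, returns None
```

A check like this only helps if the tool cannot bypass it, which is why it would need to sit alongside the execution isolation and privilege separation mentioned above rather than replace them.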

As agents gain autonomy and broader system access, tool ecosystems may become a dominant attack surface. Addressing this challenge will require rethinking trust assumptions at the foundation of LLM agent architectures.

AgentX–AgentBeats Highlights: Phase 2, Sprint 1 Kicks Off, Lambda & Nebius Credit Applications Open

Phase 2, Sprint 1 of the AgentX–AgentBeats competition just kicked off, and we can’t wait to see what you build! In Phase 2, participants build purple agents to tackle select top green agents from Phase 1 and compete on the public leaderboards.

Unlike Phase 1, where participants competed across all tracks throughout the entire duration, Phase 2 introduces a sprint-based format. The competition is organized into four rotating sprints.

Sprint 1 Details:

📋 March 2 – March 22, 2026

Three tracks and associated benchmarks/green agents are live for the first sprint:

Game Agent Track

Finance Agent Track

Business Process Agent Track

Official leaderboards for these benchmarks are coming soon to agentbeats.dev—stay tuned!

🗓️ Upcoming Sprints (Tentative)

Sprint 2 (3/23 – 4/12): Research Agent, Multi-agent Evaluation, τ²-Bench, Computer Use & Web Agent

Sprint 3 (4/13 – 5/3): Agent Safety, Coding Agent, Cybersecurity Agent

Sprint 4 (5/4 – 5/24): General Purpose Agents

For more details on each sprint and how to compete in Phase 2, please refer to the AgentX–AgentBeats website!

Lastly, we want to thank Lambda, Nebius, and OpenAI for supporting Phase 2 with GPU, cloud, and inference resources! We’re now opening the Compute Credits Signup form, which includes:

Lambda: $100 cloud/GPU credits for every participant (individual or team)

Nebius: $50 inference credits for every participant (individual or team)

OpenAI: $50 API credits for every participant (individual or team)

Note: We have extended the deadline to apply for credits to Sunday, March 8, at 11:59 PM PT.

If you already submitted the Compute Credits Form and would like to receive the OpenAI credits, you must fill out the Compute Credits Form again to indicate your interest.

To apply for the credits, ensure that you:

Fill out both the Individual Participant Signup Form and the Team Signup Form.

Then complete the Compute Credits Signup form.

Additional resources and prizes for Phase 2 will be announced in the coming weeks. Stay tuned!

As of today, 1,300+ teams from around the world have joined AgentX–AgentBeats. Hosted by Berkeley RDI, this global challenge builds on our amazing Agentic AI MOOC community of ~40K learners, uniting builders, researchers, engineers, and AI enthusiasts worldwide to build, benchmark, and push the boundaries of agentic AI.

Berkeley Xcelerator — Applications Now Open!

The Berkeley Xcelerator, a non-dilutive accelerator program designed to support pre-seed and seed-stage startups building at the forefront of Agentic AI, is now open for applications!

The Xcelerator is built in partnership with Berkeley RDI’s research community and ecosystem partners, offering selected teams the support, resources, and guidance to take their startup to the next level! In addition, the Xcelerator is open to everyone; you do not need to be affiliated with UC Berkeley to apply!

Why apply to the Xcelerator?

Unparalleled access to frontier research and expertise through close collaboration with Berkeley RDI’s community across agentic AI, AI safety and security, and the broader AI landscape.

Practical enablement through industry partnerships, including cloud, GPU, and API credits provided by industry partners such as Google Cloud, Google DeepMind, OpenAI, and Nebius, with more to be announced!

Visibility and network effects through the Berkeley ecosystem and Berkeley RDI’s global community of 56,000+ developers and builders, including the rapidly growing Agentic AI MOOC community.

A culminating Demo Day at the Agentic AI Summit (August 1–2, 2026), bringing together 5,000+ in-person attendees and placing your startup directly in front of top-tier VCs, leading AI researchers, industry executives, and strategic partners.

We’re looking for AI and Agentic AI startups at the pre-seed or seed stage. If you think that you or your team are a good fit, we encourage you to learn more and apply via the Xcelerator website and form below!

📅 Applications close on Fri, March 20, 2026!

Our sincerest thanks to all of our sponsors and partners.

Agentic AI Summit 2026 (Early-Bird Pricing and CFP are Live!)

Save the date! The Agentic AI Summit returns to Berkeley on August 1–2, 2026, welcoming 5,000+ expected in-person attendees for two days of insights and innovation. Building on last year’s sold-out success—with 2,000+ in‑person attendees and 40,000+ global livestream participants—the summit will bring together researchers, builders, industry leaders, and the global agentic AI community for keynotes, technical talks and panels, hands-on workshops, live demos, and more!

🎟️ Early‑Bird Pricing (Limited Capacity)

A limited number of early‑bird tickets are still available:

Student Early-Bird: $99

Standard Early-Bird: $249

If you’re looking to secure the best ticket price and be part of the conversation shaping the future of Agentic AI, we encourage you to register early. We look forward to welcoming you to Berkeley this August.

We’re also thrilled to share that the Call for Speaking Proposals (CFP) for the Agentic AI Summit 2026 is now open!

If you’re interested in sharing your work through a technical talk, panel discussion, workshop, tutorial, or poster presentation, and in helping advance the frontiers of Agentic AI, we warmly invite you and/or your team to apply and be part of the conversation at the Summit.

Please complete the form below to submit your proposal. The program committee will review submissions on a rolling basis.

Trends This Week

OpenAI announced a $110 billion funding round that brings its valuation to approximately $730 billion, marking one of the largest private capital raises in history. The round includes a reported $50 billion investment from Amazon and $30 billion each from Nvidia and SoftBank. Amazon’s commitment begins with $15 billion upfront, with the remaining $35 billion tied to performance milestones achieved by OpenAI. Alongside the funding announcement, OpenAI disclosed that ChatGPT has reached 900 million weekly active users and surpassed 50 million paid subscribers, with 9 million paying businesses using OpenAI’s tools.

Google released Nano Banana 2 (also known as Gemini 3.1 Flash Image), its latest image generation model, combining Nano Banana Pro’s intelligence with the speed of the Gemini Flash model suite. The model enables faster image generation and rapid editing, leverages real-time web search grounding for more accurate subject rendering, and improves text rendering within images. Nano Banana 2 is rolling out across the Gemini app, Search, AI Studio, the Gemini API, Vertex AI, Flow, and Google Ads.

Andrej Karpathy published a new blog post introducing microGPT, a minimalist educational project that compresses the full training and inference pipeline of a GPT-style transformer into a single, approximately 200-line Python file. The script includes a dataset loader, character-level tokenizer, GPT-2–style Transformer architecture, autograd engine, training loop, and text sampling code — all implemented in pure Python without frameworks like PyTorch or TensorFlow. Karpathy says that the project is the culmination of “a decade-long obsession to simplify LLMs to their bare essentials,” and is available as source code on GitHub.

Salesforce used its latest earnings call to directly counter fears of a looming “SaaSpocalypse,” arguing that AI agents strengthen, rather than replace, SaaS platforms. As part of its defense, the company introduced a new metric for its agentic products: Agentic Work Units (AWU). Instead of measuring raw token usage, AWU tracks whether an AI agent completed a verifiable enterprise task, such as writing to a system of record or executing a workflow step. The company positioned AWU as a more substantive measure of enterprise value, with Salesforce president and CMO Patrick Stokes noting that while “you can ask [an agent] a question and it can write you a poem,” that output is not “really all that valuable in the enterprise world” because it is not measurable.
